9-Point Validation: How to Eliminate Result Processing Errors in University Exams

Learn how automated result processing with 9-point error validation eliminates totalling errors, catches score discrepancies, and ensures error-free result publication for university exams.

The Cost of Result Processing Errors

A single totalling error in a university exam can change a student's grade, delay their graduation, or trigger a costly re-evaluation exercise. Multiply that across lakhs of answer books, and result processing errors become a systemic problem.

Traditional result processing involves manual totalling, data entry, verification, and compilation in spreadsheets.

Every step is a point of failure. Manual totalling errors. Data entry typos. Verification fatigue. Excel formula mistakes. It's no surprise that universities regularly discover errors after results are published — leading to revised results, student protests, and media embarrassment.

What is 9-Point Validation?

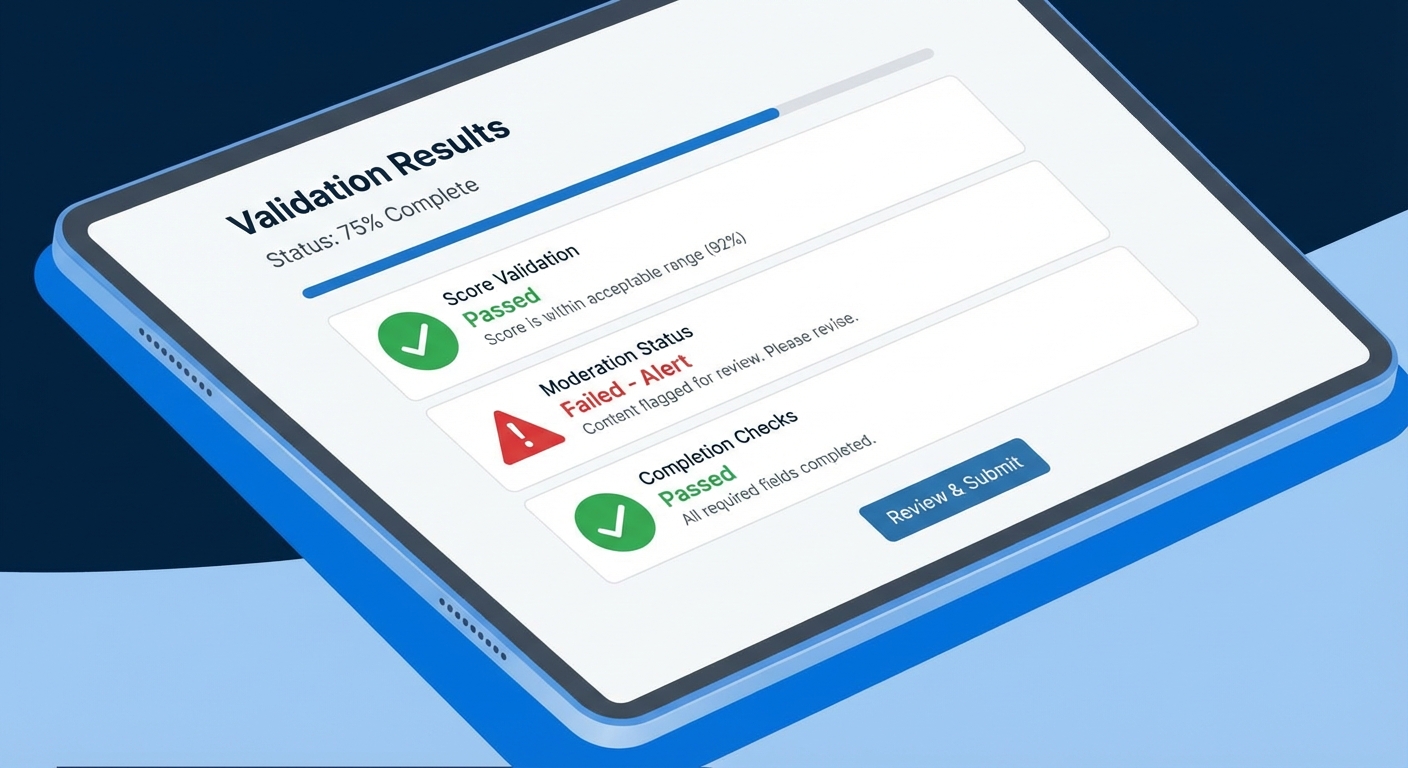

MAPLES OSM's result processing module runs a 9-point automated validation on every answer book before results are published. Each check catches a specific category of error that would otherwise slip through manual processes.

Point 1: Unevaluated Questions

The system checks that marks have been entered for every question in the question paper structure. If Q3(b) is blank, the booklet is flagged — it might mean the evaluator missed a question, or the student didn't attempt it (in which case the evaluator should have entered 0).
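As a rough illustration, the check could look like the sketch below. It assumes a hypothetical data model (not the MAPLES OSM API) in which the question paper structure is a list of question IDs and entered marks are a dictionary, with `None` or a missing key meaning "nothing entered".

```python
from typing import Optional

def find_unevaluated(structure: list[str], marks: dict[str, Optional[float]]) -> list[str]:
    """Return question IDs with no mark entered (hypothetical data model)."""
    # A mark of 0 counts as evaluated; only a missing or None entry is flagged.
    return [q for q in structure if marks.get(q) is None]

structure = ["Q1", "Q2", "Q3a", "Q3b"]
marks = {"Q1": 8, "Q2": 0, "Q3a": 4, "Q3b": None}
unevaluated = find_unevaluated(structure, marks)
print(unevaluated)
```

Note that Q2, marked 0, passes the check, while the blank Q3b is reported.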

Point 2: Score Exceeding Maximum Marks

Individual question marks are validated against the maximum marks defined in the question paper. If Q1 has a maximum of 10 marks and the evaluator entered 12, the booklet is flagged instantly. This is a surprisingly common error in paper-based evaluation, where evaluators write marks without referring to the marking scheme.
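A minimal sketch of the maximum-marks check, assuming entered marks and maximum marks are both plain dictionaries keyed by question ID. The function name and data shapes are illustrative assumptions.

```python
def find_over_max(marks: dict[str, float], max_marks: dict[str, float]) -> dict[str, tuple[float, float]]:
    """Map each offending question to (entered mark, maximum mark)."""
    return {q: (m, max_marks[q]) for q, m in marks.items() if m > max_marks[q]}

# Q1 is out of 10 but 12 was entered, as in the example above.
over_max = find_over_max({"Q1": 12, "Q2": 7}, {"Q1": 10, "Q2": 10})
print(over_max)
```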

Point 3: Sub-Question Total Mismatches

For questions with sub-parts (Q1a, Q1b, Q1c), the system verifies that the sub-question marks add up to the question total. If Q1a=3, Q1b=4, Q1c=5, but Q1 total is recorded as 11 instead of 12, the discrepancy is caught.
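The Q1 example works out as follows in a short sketch. The function name is hypothetical; the numbers are the ones from the paragraph above.

```python
def subtotal_mismatch(sub_marks: dict[str, float], recorded_total: float) -> float:
    """Return recorded total minus the sum of sub-question marks (0 means consistent)."""
    return recorded_total - sum(sub_marks.values())

q1_parts = {"Q1a": 3, "Q1b": 4, "Q1c": 5}  # sums to 12
diff = subtotal_mismatch(q1_parts, recorded_total=11)
print(diff)  # -1: the recorded total is one mark short
```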

Point 4: Missing Moderator Approval

Every answer book that requires moderation must have moderator sign-off before entering result processing. Books that bypassed the moderation stage — whether by accident or system error — are flagged and routed back to the moderation queue.
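One way to picture this check, assuming each booklet record carries two hypothetical flags, `requires_moderation` and `moderator_approved`:

```python
from dataclasses import dataclass

@dataclass
class Booklet:
    booklet_id: str
    requires_moderation: bool
    moderator_approved: bool

def missing_approval(booklets: list[Booklet]) -> list[str]:
    """Return IDs of booklets that need moderation but have no sign-off."""
    return [b.booklet_id for b in booklets
            if b.requires_moderation and not b.moderator_approved]

booklets = [
    Booklet("BK-001", requires_moderation=True, moderator_approved=True),
    Booklet("BK-002", requires_moderation=True, moderator_approved=False),
    Booklet("BK-003", requires_moderation=False, moderator_approved=False),
]
back_to_moderation = missing_approval(booklets)
print(back_to_moderation)
```

Only BK-002 is routed back: BK-003 has no sign-off either, but it never required moderation.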

Point 5: Duplicate Evaluations Without Resolution

If the same answer book was evaluated by two evaluators (intentionally, for quality assurance), the system checks that the duplicate evaluation has been resolved. Unresolved duplicates — where two different evaluators have given different marks and no moderator has adjudicated — are flagged.
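A sketch of how unresolved duplicates might be detected. It assumes evaluations arrive as `(booklet_id, evaluator_id, total)` tuples and that adjudicated booklets are tracked in a set. Both are illustrative choices, and identical totals from two evaluators are treated here as needing no adjudication.

```python
from collections import defaultdict

def unresolved_duplicates(evaluations, adjudicated):
    """Return booklet IDs with conflicting totals and no moderator decision."""
    totals = defaultdict(dict)
    for booklet_id, evaluator_id, total in evaluations:
        totals[booklet_id][evaluator_id] = total
    return sorted(
        b for b, by_eval in totals.items()
        if len(set(by_eval.values())) > 1 and b not in adjudicated
    )

evaluations = [
    ("BK-001", "EV-A", 58), ("BK-001", "EV-B", 61),  # conflict, unresolved
    ("BK-002", "EV-A", 40), ("BK-002", "EV-C", 45),  # conflict, adjudicated
    ("BK-003", "EV-B", 72),                          # single evaluation
]
unresolved = unresolved_duplicates(evaluations, adjudicated={"BK-002"})
print(unresolved)
```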

Point 6: Score Discrepancies Between Evaluators

For subjects using multi-evaluator assessment, the system compares marks from different evaluators. If Evaluator A gave 65/100 and Evaluator B gave 42/100, the 23-mark discrepancy is flagged for moderator review. The threshold is configurable per institution.
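The comparison itself is simple arithmetic. In the sketch below, the threshold of 15 marks is an assumed value chosen purely for illustration, since each institution configures its own.

```python
def exceeds_threshold(score_a: float, score_b: float, threshold: float) -> bool:
    """True when two evaluators' totals differ by more than the threshold."""
    return abs(score_a - score_b) > threshold

gap = abs(65 - 42)  # the 23-mark discrepancy from the example
flagged = exceeds_threshold(65, 42, threshold=15)  # 15 is a hypothetical setting
print(gap, flagged)
```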

Point 7: Booklets Still In Progress

Any answer book still showing "in progress" status — where an evaluator started but didn't finish — is excluded from result processing and flagged. This prevents partial evaluations from being published as final results.
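A minimal sketch of the status filter, assuming a hypothetical two-value status field per booklet. The enum and its values are illustrative, not the product's actual status model.

```python
from enum import Enum

class Status(Enum):
    IN_PROGRESS = "in_progress"
    COMPLETED = "completed"

def split_by_completion(statuses: dict[str, Status]) -> tuple[list[str], list[str]]:
    """Split booklet IDs into (ready for results, held back and flagged)."""
    ready = [b for b, s in statuses.items() if s is Status.COMPLETED]
    held_back = [b for b, s in statuses.items() if s is Status.IN_PROGRESS]
    return ready, held_back

statuses = {"BK-001": Status.COMPLETED, "BK-002": Status.IN_PROGRESS}
ready, held_back = split_by_completion(statuses)
print(ready, held_back)
```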

Point 8: Unresolved QC Issues

If the quality control stage flagged pages in a booklet (blur, missing pages, cut-off content), and those issues were never resolved, the booklet is excluded from results. This prevents results being published for booklets where the evaluator might have been working with unreadable pages.
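In code, this amounts to excluding any booklet with at least one open QC issue. The record layout below (`booklet_id`, `page`, `kind`, `resolved`) is an assumption made for the sketch.

```python
def booklets_with_open_qc(qc_issues: list[dict]) -> list[str]:
    """Return IDs of booklets that still have an unresolved QC issue."""
    return sorted({i["booklet_id"] for i in qc_issues if not i["resolved"]})

qc_issues = [
    {"booklet_id": "BK-010", "page": 3, "kind": "blur", "resolved": True},
    {"booklet_id": "BK-011", "page": 7, "kind": "missing_page", "resolved": False},
    {"booklet_id": "BK-011", "page": 9, "kind": "cut_off", "resolved": True},
]
excluded = booklets_with_open_qc(qc_issues)
print(excluded)
```

BK-011 is excluded because one of its two issues is still open, even though the other was resolved.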

Point 9: Incomplete Batch Processing

The system verifies that all booklets in a batch have been processed. If batch BK-2026-042 has 50 booklets but only 48 have completed evaluation, the batch is flagged and the 2 missing booklets are identified.
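The batch check is essentially a set difference between expected and completed booklets. The sketch below reproduces the 50-versus-48 example; the booklet ID format and the two missing IDs are invented for illustration.

```python
def missing_from_batch(expected_ids: list[str], completed_ids: list[str]) -> list[str]:
    """Return expected booklet IDs that have not completed evaluation."""
    return sorted(set(expected_ids) - set(completed_ids))

# Hypothetical ID scheme: batch number plus a two-digit sequence.
expected = [f"BK-2026-042-{n:02d}" for n in range(1, 51)]
not_done = {"BK-2026-042-17", "BK-2026-042-33"}
completed = [b for b in expected if b not in not_done]

missing = missing_from_batch(expected, completed)
print(len(expected), len(completed), missing)
```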

Multi-Level Result Processing

Results can be processed at three levels:

Institution-Wide Processing

Process results for all subjects across all departments in a single operation. The 9-point validation runs across the entire dataset, and a summary report shows how many booklets passed validation vs how many were flagged.

Department-Level Processing

Process results for a single department. Useful when departments have different exam schedules or when one department is ready before others.

Subject-Level Processing

Process results for a single subject. The most granular option — often used when a specific subject had evaluation delays or issues that need to be resolved independently.
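The three levels can be thought of as one summary operation with an optional scope filter. The sketch below assumes each booklet record carries a department, a subject, and a list of validation flags; the field names and sample data are hypothetical.

```python
def validation_summary(booklets, department=None, subject=None):
    """Count passed vs flagged booklets; None means no filter at that level."""
    in_scope = [
        b for b in booklets
        if (department is None or b["department"] == department)
        and (subject is None or b["subject"] == subject)
    ]
    flagged = sum(1 for b in in_scope if b["flags"])
    return {"passed": len(in_scope) - flagged, "flagged": flagged}

booklets = [
    {"department": "Physics", "subject": "PHY101", "flags": []},
    {"department": "Physics", "subject": "PHY102", "flags": ["unevaluated_question"]},
    {"department": "Commerce", "subject": "COM201", "flags": []},
]
institution = validation_summary(booklets)
physics = validation_summary(booklets, department="Physics")
phy102 = validation_summary(booklets, subject="PHY102")
print(institution, physics, phy102)
```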

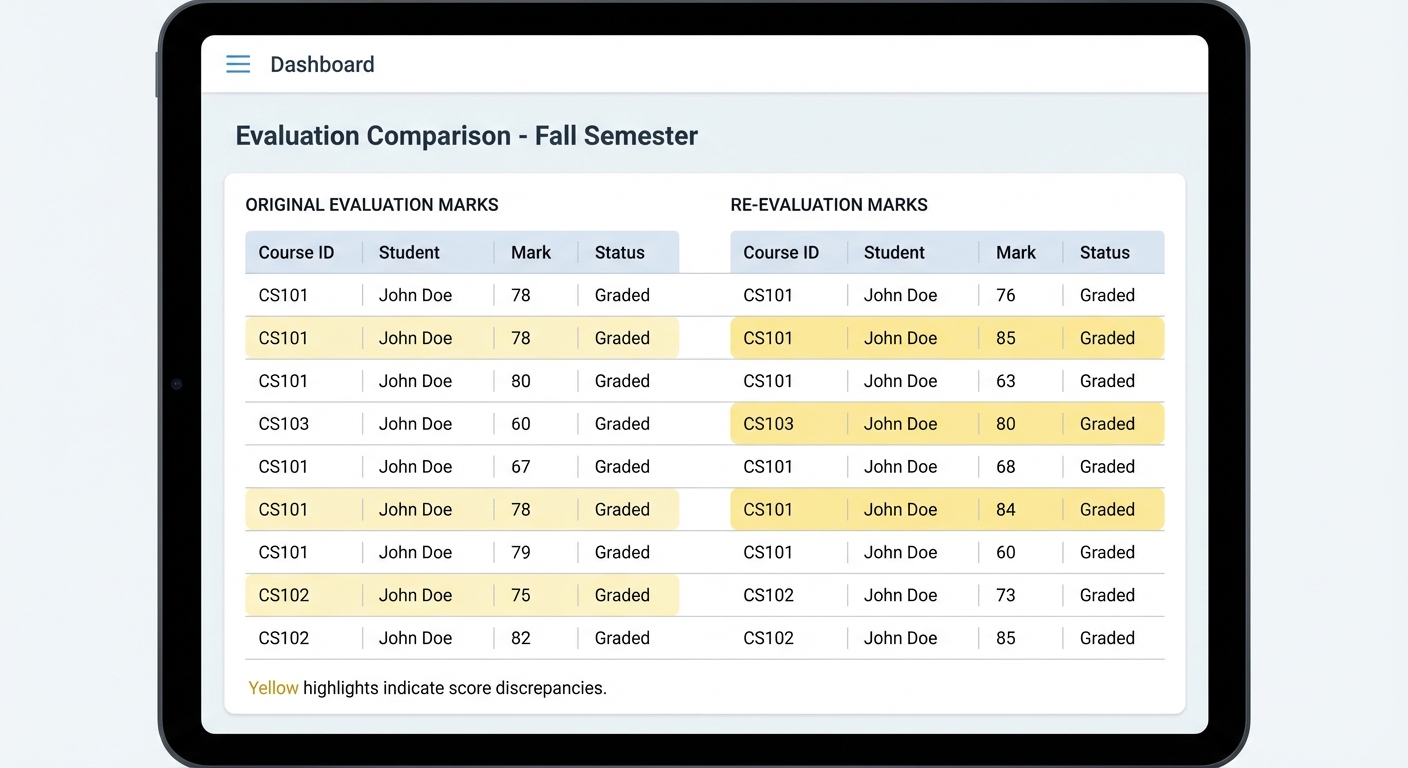

Re-Evaluation: Handling It Right

Re-evaluation requests are inevitable. A student is unhappy with their marks, pays the re-evaluation fee, and the institution must re-check the answer book.

In Paper-Based Systems

Re-evaluation is a logistical nightmare.

In MAPLES OSM

Re-evaluation is a few clicks.

The entire re-evaluation audit trail is preserved — who originally evaluated, who re-evaluated, what changed, and when.
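To make the four audit questions concrete (who originally evaluated, who re-evaluated, what changed, and when), an audit entry might be modelled along these lines. The class, field names, and sample values are assumptions for illustration, not the product's schema.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

@dataclass(frozen=True)  # frozen: an audit entry should not be edited after creation
class ReEvaluationRecord:
    booklet_id: str
    original_evaluator: str
    re_evaluator: str
    original_total: float
    revised_total: float
    recorded_at: datetime

    @property
    def change(self) -> float:
        """Marks gained (positive) or lost (negative) on re-evaluation."""
        return self.revised_total - self.original_total

record = ReEvaluationRecord(
    "BK-001", "EV-A", "EV-B", 42, 47,
    recorded_at=datetime(2026, 1, 15, tzinfo=timezone.utc),
)
print(record.change)
```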

Result Delivery and Transparency

Once results pass validation and are approved by administrators, they're published to students.

Student Result View

Result Delivery Tracking

The system tracks:

Automated Daily Reports

Throughout the evaluation cycle, the system generates automated daily progress reports and emails them to administrators.

These reports eliminate the need for daily status meetings and manual progress tracking.

The Impact: Error-Free Results at Scale

With 9-point validation, the most common result processing errors are caught automatically:

| Error Type | Paper System | MAPLES OSM |
|---|---|---|
| Totalling errors | 2-5% of booklets | 0% (automatic calculation) |
| Missing question marks | Caught at verification (if done) | Caught automatically |
| Exceeded maximum marks | Rarely caught before publication | Impossible (system prevents) |
| Incomplete evaluations | Discovered during result compilation | Flagged in real-time |
| Duplicate evaluation conflicts | Manual resolution | Automatic detection + routing |

For institutions evaluating 5,00,000+ answer books, even a 2% totalling error rate means 10,000 booklets with errors. Automatic calculation removes this class of error.
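The arithmetic behind that figure, extended to the upper end of the 2-5% range quoted in the table, is sketched below. The function name is illustrative.

```python
def booklets_with_errors(total_booklets: int, error_rate_percent: int) -> int:
    """Number of affected booklets at a given whole-number error rate."""
    return total_booklets * error_rate_percent // 100

total = 500_000  # 5,00,000 in Indian digit grouping
low = booklets_with_errors(total, 2)
high = booklets_with_errors(total, 5)
print(low, high)
```

At this volume, the paper-system range corresponds to between 10,000 and 25,000 affected booklets.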

Conclusion

Result processing is the final — and most visible — stage of the evaluation pipeline. An error here directly impacts students. 9-point automated validation, combined with multi-level processing, re-evaluation support, and transparent result delivery, ensures that published results are accurate, auditable, and defensible.

For institutions still processing results through Excel spreadsheets and manual verification, the move to automated validation isn't just an efficiency gain — it's a risk elimination strategy.

Ready to digitize your evaluation process?

See how MAPLES OSM can transform exam evaluation at your institution.